Artificial Intelligence in Ophthalmology

All content on Eyewiki is protected by copyright law and the Terms of Service. This content may not be reproduced, copied, or put into any artificial intelligence program, including large language and generative AI models, without permission from the Academy.

Definition

Artificial intelligence (AI), a term introduced in the 1950s, refers to software that can mimic cognitive functions such as learning and problem solving.[1] It enables machines to learn from experience and adjust to new inputs. In ophthalmology, AI systems can analyse ocular images, clinical data, and patient-specific information for screening, diagnosis, risk prediction, treatment planning, workflow optimization, and clinical decision support,[1] and they have applications across many ophthalmic subspecialties.[2]

Historical Evolution of AI in Ophthalmology

- Artificial intelligence in ophthalmology has evolved from rule-based systems and classical machine learning to deep learning, autonomous screening, and foundation models. Early systems used predefined rules or handcrafted image features to identify retinal lesions, optic disc changes, or vascular abnormalities.

- The deep learning era, driven by convolutional neural networks, greatly improved image-based detection of diabetic retinopathy, glaucoma, age-related macular degeneration, and retinopathy of prematurity[3].

- A major clinical milestone occurred in 2018, when IDx-DR, now LumineticsCore, became the first FDA-authorized autonomous AI system for diabetic retinopathy screening.[4][5]

- Since 2023, newer approaches such as RETFound, vision-language models, and large language models have expanded ophthalmic AI toward multimodal diagnosis, clinical decision support, documentation, triage, and patient education.[6][7]

Table 1. Evolution of AI in Ophthalmology

| Era | AI stage | Main method | Main input | Typical output | Ophthalmic example |

| 1950s-1980s | Rule-based AI | Human-coded rules | Hand-selected image features | Feature present/absent | Early lesion detectors |

| 1990s-2000s | Classical machine learning | Feature extraction + classifier | Fundus/OCT/clinical variables | Diagnosis or risk score | Glaucoma classifier |

| 2010s | Deep learning | Convolutional neural networks trained on images | Raw images | Classification/segmentation | DR or AMD detection |

| 2018 onwards | Clinical deployment: autonomous AI | Validated locked algorithm | Retinal photographs | Refer/no-refer output | DR screening |

| 2023 onwards | Foundation models | Large-scale self-supervised pretraining | Massive ophthalmic datasets | Adaptable representation | RETFound |

| 2024-2026 | Multimodal AI | Image + text + structured data | OCT, fundus, VF, EHR | Integrated prediction | Glaucoma progression |

| 2024-2026 | Generative AI/large language models (LLMs) | Language or image-text models | Text ± images | Explanation, summary, triage support | Ophthalmic chatbot/report drafting |

| 2024-2026 | AI agents | LLM + tools/workflow | Multiple clinical inputs | Task completion | Triage + interpretation + referral draft |

Core Artificial Intelligence Concepts for Ophthalmologists

- Ophthalmologists do not need to master the mathematics of AI, but they should understand the basic types of AI tools, how they are trained, and where they can fail.

- A working knowledge of rule-based systems, machine learning, deep learning, foundation models, multimodal AI, large language models, and AI agents therefore helps clinicians use these tools safely for screening, diagnosis, monitoring, documentation, and decision support. The key terms and their simplified meanings are summarised in Table 2.

Table 2. Key terminologies

| Concept | Simplified meaning | Ophthalmology example | Key limitation |

| Rule-based AI | Uses predefined human-written rules | Detecting lesions based on programmed thresholds | Rigid; poor generalization |

| Machine learning | Learns patterns from data/features | Glaucoma classification using optic disc parameters | Depends on quality of selected features |

| Supervised learning | Learns from labeled examples | Fundus images labeled as DR/no DR | Requires accurate ground truth |

| Unsupervised learning | Finds hidden patterns without labels | Clustering OCT or fundus phenotypes | Clusters may lack clinical meaning |

| Deep learning | Uses multilayer neural networks to learn complex patterns | DR, AMD, ROP, glaucoma detection | Needs large datasets; black-box issue |

| Convolutional neural network | Deep learning model optimized for images | OCT or fundus photograph classification | Sensitive to image quality/artifacts |

| Segmentation model | Identifies and outlines structures | Retinal layers, optic disc/cup, fluid on OCT | Errors with poor scans or abnormal anatomy |

| Foundation model | Large pretrained model adaptable to many tasks | RETFound for retinal image analysis | Needs external clinical validation |

| Multimodal AI | Combines different data types | Fundus + OCT + visual field + EHR data | Complex integration and validation |

| Large language model | AI model that processes/generates text | Patient education, referral letters, summaries | Can hallucinate or sound overconfident |

| Vision-language model | Combines image and text understanding | Explaining OCT/fundus findings with clinical text | Early-stage; needs safety testing |

| AI agent | Uses AI plus tools to complete workflows | Triage + image analysis + report drafting | Needs strict human oversight |
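To make the difference between the first rows of Table 2 concrete, the sketch below contrasts a hand-written threshold rule (rule-based AI) with a single-feature logistic regression that learns its decision boundary from labelled examples (supervised machine learning). It is a toy illustration only: the cup-to-disc ratio (CDR) feature, the 0.6 threshold, and the ten training examples are hypothetical values chosen for demonstration, not clinical criteria or real data.

```python
import math


# Rule-based AI: a human-written rule with a fixed, hypothetical threshold.
def rule_based_glaucoma_flag(cdr: float, threshold: float = 0.6) -> bool:
    return cdr >= threshold


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Machine learning: logistic regression fitted by batch gradient descent
# on labelled examples (supervised learning).
def train_logistic(xs, ys, lr=0.5, epochs=2000):
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y  # derivative of log-loss w.r.t. the logit
            grad_w += error * x
            grad_b += error
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


# Toy, hypothetical training data: CDR values labelled 0 (normal) or 1 (glaucoma).
cdrs = [0.30, 0.35, 0.40, 0.45, 0.50, 0.60, 0.65, 0.70, 0.75, 0.80]
labels = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]

w, b = train_logistic(cdrs, labels)
for cdr in (0.35, 0.55, 0.75):
    prob = sigmoid(w * cdr + b)
    print(f"CDR {cdr:.2f}: rule flag={rule_based_glaucoma_flag(cdr)}, learned probability={prob:.2f}")
```

The rule never changes unless a human rewrites it, whereas the learned boundary depends entirely on the labelled data, which is why Table 2 lists label quality and dataset size as the main limitations of learned models.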

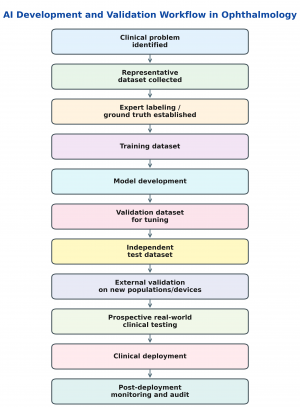

AI Development and Validation Workflow

AI tools in ophthalmology should be developed around a clearly defined clinical problem, such as diabetic retinopathy screening, OCT fluid detection, glaucoma progression prediction, or visual field interpretation.

The process typically follows the pathway below (Figure 1):

- Collection of high-quality, representative data.

- Expert labeling to establish the reference standard, or “ground truth.”

- Division of the dataset into training, validation, and testing sets.

- The model learns from the training data, is tuned using validation data, and is finally evaluated on an independent test set.

- For clinical use, internal testing alone is insufficient. The model should undergo external validation on data from different populations, devices, clinics, and disease severities to assess generalizability. Ideally, the algorithm should also be prospectively tested in a real-world clinical workflow before deployment.

- After implementation, continued monitoring is needed to detect performance drift, bias, safety issues, and changes in accuracy over time.
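The splitting step is where an easily missed error, data leakage, can occur: if images from the same patient (for example, right and left eyes, or repeat visits) fall into both the training and test sets, test performance can be overestimated. A minimal sketch of a patient-level split is shown below, assuming each image record carries a patient identifier; the function name, the 70/15/15 proportions, and the example records are illustrative choices, not a standard.

```python
import random
from collections import defaultdict


def split_by_patient(records, train_frac=0.70, val_frac=0.15, seed=42):
    """Split (image_id, patient_id) records so that every patient's images
    fall into exactly one of the training, validation, or test sets."""
    images_by_patient = defaultdict(list)
    for image_id, patient_id in records:
        images_by_patient[patient_id].append(image_id)

    # Shuffle patients (not images) with a fixed seed for reproducibility.
    patients = sorted(images_by_patient)
    random.Random(seed).shuffle(patients)

    n_train = int(len(patients) * train_frac)
    n_val = int(len(patients) * val_frac)
    groups = {
        "train": patients[:n_train],
        "validation": patients[n_train:n_train + n_val],
        "test": patients[n_train + n_val:],
    }
    # Expand each patient group back into its image identifiers.
    return {
        name: [img for pid in pids for img in images_by_patient[pid]]
        for name, pids in groups.items()
    }


# Hypothetical example: 20 patients, each with a right-eye (OD) and left-eye (OS) image.
records = [(f"P{i:02d}_{eye}", f"P{i:02d}") for i in range(20) for eye in ("OD", "OS")]
splits = split_by_patient(records)
for name, images in splits.items():
    print(name, len(images), "images")
```

A random split of this kind only supports internal testing; the external validation described above requires data from different sites, devices, or populations that the model never saw during development.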

Limitations and Sources of Error

- Although AI has shown strong performance in several ophthalmic imaging tasks, its accuracy depends heavily on the quality, diversity, and relevance of the data used for training and validation.

- Poor image quality, media opacity, segmentation errors, device-specific artefacts, high myopia, atypical anatomy, rare disease phenotypes, and underrepresented populations can all reduce model performance.

- AI systems may also fail when applied outside their intended setting, such as a different camera, OCT device, ethnic group, disease severity, or clinical workflow.

- In addition, many deep learning systems function as “black boxes,” making it difficult for clinicians to understand why a particular output was generated.

- Generative AI and large language models introduce further risks, including hallucinated information, inaccurate references, overconfident responses, privacy concerns, and automation bias.

A summary of common sources of error is presented in Table 3.

Table 3. Common AI failure modes

| Failure mode | Ophthalmic example | Practical mitigation |

| Poor image quality | Cataract/media opacity causing a false-negative DR screen | Automated quality check + human review |

| Dataset bias | Algorithm trained mainly on one ethnicity or camera type | Diverse external validation |

| Device dependence | Model works on one fundus camera but not another | Camera-specific validation |

| High myopia/artifacts | False glaucoma classification from tilted disc | Clinician review and multimodal confirmation |

| Rare phenotype | AI misses uncommon retinal dystrophy or uveitis | Avoid autonomous use outside validated scope |

| Automation bias | Clinician over-trusts AI output | Human-in-the-loop workflow |

| Hallucination | LLM invents diagnosis or reference | Retrieval + citation verification |

| Data drift | Accuracy falls after deployment in new population | Post-deployment monitoring |
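Several mitigations in Table 3 (automated quality checks, device-specific validation, avoiding use outside the validated scope, and human-in-the-loop review) can be expressed as a gate that runs before any AI result is released. The sketch below is hypothetical throughout: the camera names, the 0.7 quality threshold, the age limit, the `ScreeningCase` fields, and the routing messages are assumptions for illustration and do not describe any cleared product.

```python
from dataclasses import dataclass

# Hypothetical validated scope. In practice these limits come from the device's
# intended-use statement and the populations and cameras used in its validation.
VALIDATED_CAMERAS = {"camera_model_A", "camera_model_B"}
MIN_QUALITY_SCORE = 0.7  # hypothetical threshold on a 0-1 image-quality score
MIN_AGE_YEARS = 18       # hypothetical lower age limit of the validated population


@dataclass
class ScreeningCase:
    camera_model: str
    quality_score: float  # from a (hypothetical) automated image-quality model
    patient_age: int
    ai_referable: bool    # from a (hypothetical) DR screening model


def route(case: ScreeningCase) -> str:
    """Release an AI result only for in-scope, gradable images; otherwise route to a human."""
    if case.camera_model not in VALIDATED_CAMERAS:
        return "human review: device outside validated scope"
    if case.patient_age < MIN_AGE_YEARS:
        return "human review: patient outside validated population"
    if case.quality_score < MIN_QUALITY_SCORE:
        return "ungradable: re-image or refer for human grading"
    if case.ai_referable:
        return "refer to eye care professional"
    return "rescreen at the programme's usual interval"


print(route(ScreeningCase("camera_model_A", 0.55, 61, ai_referable=False)))
```

The ordering is deliberate: a low-quality image is reported as ungradable, so its AI result is never released as "no disease", which avoids the false reassurance that Table 4 warns about.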

How to Appraise Ophthalmic AI Studies

- Ophthalmic AI studies should be interpreted by asking whether the algorithm solves a clinically meaningful problem and whether its performance is likely to generalize beyond the dataset on which it was developed.

- Important points include the source and diversity of the dataset, quality of image acquisition, method of expert labeling or ground-truth diagnosis, separation of training, validation, and test sets, use of external validation, and comparison with human experts or current standard of care.

- Studies should also report clinically relevant outcomes such as sensitivity, specificity, area under the receiver operating characteristic curve, false-negative rate, referral accuracy, image ungradability, workflow impact, and safety. A checklist for appraising such studies is provided in Table 4.

Table 4. Checklist for appraising ophthalmic AI studies

| Question | Why it matters |

| What clinical problem is being solved? | Prevents technology-first research |

| What is the input? | Fundus photo, OCT, VF, AS-OCT, EHR, or text |

| What is the reference standard? | Determines ground-truth reliability |

| Was external validation performed? | Tests generalizability |

| Was the dataset diverse? | Reduces bias |

| Were poor-quality images handled? | Prevents false reassurance |

| Was performance compared with clinicians? | Shows clinical relevance |

| Was the model tested prospectively? | Stronger than retrospective validation |

| Is the tool autonomous or assistive? | Affects liability and deployment |

| Is there post-deployment monitoring? | Detects model drift |

| Are limitations clearly stated? | Prevents overclaiming |
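The headline metrics listed earlier in this section can be computed directly from a study's predictions and reference-standard labels. The sketch below assumes binary labels (1 = referable disease by the reference standard) and a continuous model score; it computes sensitivity, specificity, false-negative rate, and predictive values from the confusion matrix, and the AUC as the probability that a randomly chosen diseased case scores higher than a randomly chosen non-diseased case (ties counted as one half). The function names and the example data are invented for illustration.

```python
def _ratio(num, den):
    return num / den if den else float("nan")


def screening_metrics(y_true, y_pred):
    """Confusion-matrix metrics for binary labels (1 = disease present)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    return {
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "false_negative_rate": _ratio(fn, tp + fn),
        "ppv": _ratio(tp, tp + fp),
        "npv": _ratio(tn, tn + fn),
    }


def auc(y_true, scores):
    """Area under the ROC curve via pairwise comparison (Mann-Whitney form)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return _ratio(wins, len(pos) * len(neg))


# Invented example: 3 diseased and 5 non-diseased eyes, thresholded at 0.5.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.8, 0.3, 0.1, 0.2, 0.4, 0.7, 0.05]
y_pred = [1 if s >= 0.5 else 0 for s in scores]

print(screening_metrics(y_true, y_pred))
print(f"AUC = {auc(y_true, scores):.3f}")  # AUC = 0.867
```

In this example, sensitivity is 2/3 and specificity is 4/5 at the chosen threshold, while the AUC (13/15) summarises ranking performance across all thresholds. This is why studies should report the operating point used clinically, not the AUC alone.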

Clinical Applications of AI in Ophthalmology

- Artificial intelligence has been applied across nearly every ophthalmic subspecialty, with the strongest evidence in image-based screening, classification, segmentation, and risk prediction.

- The most mature clinical use is in diabetic retinopathy screening, while other established or near-clinical applications include OCT-based retinal disease analysis, glaucoma assessment, retinopathy of prematurity evaluation, cataract grading, intraocular lens calculation, and corneal imaging.

- Emerging applications include multimodal disease prediction, infectious keratitis classification, surgical video analysis, ocular oncology, neuro-ophthalmology, oculoplastics, teleophthalmology, and AI-assisted care delivery in low-resource settings.

- Recent reviews emphasize that ophthalmic AI is moving from single-task image classifiers toward multimodal models and foundation models that can integrate images, clinical text, and structured patient data[8].

Cataract, Biometry, and Refractive Surgery

- AI in cataract and refractive surgery may assist in cataract detection and grading, IOL power calculation, toric IOL planning, posterior capsule opacification risk prediction, refractive surgery screening, surgical video analysis, and complication prediction (Table 5).

- Machine learning and newer AI-based tools are especially relevant for refractive prediction, complex biometry, post-refractive surgery eyes, and personalized IOL selection.[9][10]

- "Touch Surgery Enterprise" is an intraoperative AI device that can assist in surgical data analysis and may play a key role in surgical training (https://www.medtronic.com/en-us/healthcare-professionals/specialties/touch-surgery.html).

Table 5: AI in cataract, biometry, and refractive surgery

| Use | Input | Output |

| Cataract grading | Slit-lamp/retroillumination images | Severity grade |

| IOL calculation | Biometry data | Predicted IOL power |

| Congenital cataract | Slit-lamp images | Diagnosis/severity |

| PCO prediction | Surgical and clinical variables | Risk score |

| Refractive screening | Tomography/topography | Ectasia risk |
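The IOL calculation row maps biometry inputs to a predicted lens power. For context, the classical regression approach that later formulas and AI-based methods aim to improve on is the original SRK formula, P = A - 2.5L - 0.9K, where P is the IOL power for emmetropia (dioptres), A is the lens-specific A-constant, L is the axial length (mm), and K is the average keratometry (dioptres). The sketch below only evaluates that formula. The example values are hypothetical, and SRK I has been superseded in practice by later-generation and AI-assisted formulas.

```python
def srk_iol_power(a_constant: float, axial_length_mm: float, mean_k_d: float) -> float:
    """Original SRK regression formula: P = A - 2.5*L - 0.9*K (emmetropic IOL power, dioptres)."""
    return a_constant - 2.5 * axial_length_mm - 0.9 * mean_k_d


# Hypothetical eye: A-constant 118.4, axial length 23.5 mm, mean K 44.0 D.
print(f"{srk_iol_power(118.4, 23.5, 44.0):.2f} D")  # prints "20.05 D"
```

Because SRK is a single linear equation, it performs poorly in very short or long eyes and after refractive surgery. These are the complex-biometry situations where the machine learning approaches cited above are most relevant.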

Retina

- Retina is the most extensively studied area of ophthalmic AI because retinal diseases are commonly evaluated using fundus photographs, OCT, OCTA, fluorescein angiography, fundus autofluorescence, and widefield imaging.

- AI models have been developed for diabetic retinopathy, diabetic macular edema, age-related macular degeneration, retinopathy of prematurity, retinal vascular disorders, and treatment-response prediction.

- The strongest real-world clinical translation has occurred in diabetic retinopathy screening, while OCT-based AI is increasingly used for fluid segmentation and quantitative retinal disease monitoring.

i) Diabetic Retinopathy and Diabetic Macular Edema

- AI systems can analyze fundus photographs to identify more-than-mild diabetic retinopathy, referable diabetic retinopathy, or vision-threatening diabetic retinopathy.

- OCT-based AI can also assist in detecting and quantifying diabetic macular edema, retinal fluid, and treatment-response biomarkers.

- Recent reviews describe AI applications in DR/DME detection, staging, OCT analysis, fluid quantification, and treatment-response prediction.[11] FDA-cleared autonomous systems for DR screening are summarised in Table 6.

Table 6: FDA-cleared/autonomous AI systems for diabetic retinopathy screening

| System | Status/use | Input | Output |

| LumineticsCore / IDx-DR | Autonomous DR screening; first autonomous AI diagnostic system for DR authorized by the FDA (De Novo, 2018) | Fundus photographs | More-than-mild DR vs no more-than-mild DR |

| EyeArt | FDA-cleared autonomous DR screening | Fundus photographs | mtmDR/vtDR detection |

| AEYE-DS / Aurora handheld implementation | Portable/handheld autonomous AI clearance reported in 2024 | Handheld fundus images | Referable DR detection |
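The outputs in Table 6 are binary referral categories derived from a severity scale. As a simplified sketch, assuming grades on the International Clinical Diabetic Retinopathy (ICDR) scale plus a separate macular edema flag, more-than-mild DR (mtmDR) can be approximated as moderate non-proliferative DR or worse and/or diabetic macular edema (DME), and vision-threatening DR (vtDR) as severe non-proliferative DR or worse and/or DME. The pivotal trials for these devices used ETDRS-based definitions and clinically significant macular edema, so this mapping is an approximation for illustration, not a reimplementation of any device. The handling of ungradable images shown here is a hypothetical programme policy.

```python
from enum import IntEnum


class ICDRGrade(IntEnum):
    NO_DR = 0
    MILD_NPDR = 1
    MODERATE_NPDR = 2
    SEVERE_NPDR = 3
    PDR = 4


def screening_categories(grade: ICDRGrade, dme: bool, gradable: bool = True) -> dict:
    """Approximate mtmDR / vtDR flags from an ICDR grade plus a DME flag."""
    if not gradable:
        # Ungradable images are a separate outcome, never reported as "no disease".
        return {"result": "ungradable", "refer": True}
    mtm_dr = grade >= ICDRGrade.MODERATE_NPDR or dme
    vt_dr = grade >= ICDRGrade.SEVERE_NPDR or dme
    return {"result": "gradable", "mtmDR": mtm_dr, "vtDR": vt_dr, "refer": mtm_dr}


print(screening_categories(ICDRGrade.MODERATE_NPDR, dme=False))
```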

ii) Age-Related Macular Degeneration

- AI has been used in AMD to detect referable disease, classify disease stage, segment intraretinal and subretinal fluid, quantify drusen and geographic atrophy, and predict progression to advanced AMD.

- In neovascular AMD, OCT-based segmentation may support anti-VEGF treatment monitoring by quantifying intraretinal fluid, subretinal fluid, pigment epithelial detachment, and other biomarkers.

- Foundation models and multimodal AI may further improve integration of fundus images, OCT, OCTA, fundus autofluorescence, and clinical data (Table 7).[12]

Table 7: AI tasks in AMD

| AI task in AMD | Clinical relevance |

| Detect referable AMD | Screening and triage |

| Segment intraretinal/subretinal fluid | Anti-VEGF monitoring |

| Quantify drusen and atrophy | Risk stratification |

| Predict conversion to advanced AMD | Follow-up planning |

| Predict treatment response | Personalized therapy |

| Analyze multimodal imaging | Fundus + OCT + FAF + OCTA integration |

iii) Retinopathy of Prematurity

- AI in ROP focuses on vessel segmentation, quantification of vascular tortuosity and dilation, and automated detection of plus or pre-plus disease.

- These tools may improve consistency in ROP grading, support tele-ROP programs, and help prioritize infants requiring specialist review (Table 8).

Table 8: AI in ROP

| AI tool/concept | What it evaluates | Clinical use |

| i-ROP | Plus/pre-plus disease severity | Quantitative severity scoring |

| ROPtool | Vessel tortuosity/dilation | Plus disease support |

| RISA | Vascular tortuosity | Plus disease analysis |

| VesselMap | Vessel width/tortuosity | Automated vascular assessment |

| CAIAR | Arteriole tortuosity and venular width | Plus disease quantification |
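Several tools in Table 8 quantify vessel tortuosity. One common and simple definition is the ratio of a vessel's traced arc length to the straight-line (chord) distance between its endpoints: a straight vessel scores 1.0, and more tortuous vessels score higher. It is used here purely as an illustration and is not necessarily the metric any listed tool implements. The sketch assumes the vessel centreline has already been extracted (for example, by a segmentation model) as an ordered list of (x, y) pixel coordinates; the example coordinates are hypothetical.

```python
import math


def tortuosity_index(centreline):
    """Arc length / chord length for an ordered list of (x, y) centreline points."""
    if len(centreline) < 2:
        raise ValueError("need at least two points")
    arc = sum(math.dist(p, q) for p, q in zip(centreline, centreline[1:]))
    chord = math.dist(centreline[0], centreline[-1])
    if chord == 0:
        raise ValueError("endpoints coincide; tortuosity undefined")
    return arc / chord


straight = [(0, 0), (1, 0), (2, 0), (3, 0)]
wavy = [(0, 0), (1, 1), (2, 0), (3, 1), (4, 0)]
print(tortuosity_index(straight))         # 1.0
print(round(tortuosity_index(wavy), 3))   # 1.414
```

Because the index depends on centreline accuracy, errors in the upstream vessel segmentation propagate directly into the tortuosity score.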

Cornea and Anterior Segment

- AI has emerging applications in corneal and anterior segment disease, particularly keratoconus detection, corneal tomography analysis, infectious keratitis classification, anterior segment OCT interpretation, cataract assessment, and dry eye or meibography analysis (Table 9).

- These applications are clinically attractive because many anterior segment disorders require pattern recognition across slit-lamp photographs, Scheimpflug tomography, AS-OCT, topography, and meibography.[13]

Table 9: AI in Cornea

| Condition | AI input | Potential output |

| Keratoconus | Topography/tomography | Early ectasia detection, progression risk |

| Infectious keratitis | Slit-lamp photograph, AS-OCT | Bacterial/fungal/Acanthamoeba probability |

| Dry eye | Meibography, tear-film images | Meibomian gland dropout grading |

| Angle closure | AS-OCT | Angle segmentation and closure risk |

| Corneal opacity | Slit-lamp/AS-OCT | Opacity depth and surgical planning |
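Multi-class outputs such as the bacterial/fungal/Acanthamoeba probability in Table 9 are commonly produced by converting a network's raw class scores (logits) into probabilities with a softmax function. The sketch below assumes three hypothetical logits for a single slit-lamp image; the numbers are invented. A softmax output is only as trustworthy as the model's calibration in the population where it is used.

```python
import math


def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


classes = ["bacterial", "fungal", "Acanthamoeba"]
logits = [2.0, 0.5, -1.0]  # hypothetical model outputs for one slit-lamp image
for name, prob in zip(classes, softmax(logits)):
    print(f"{name}: {prob:.2f}")
```

A high probability for one class does not rule out mixed or atypical infection, so outputs of this kind support, rather than replace, microbiological diagnosis.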

Glaucoma

- AI has been studied in glaucoma using fundus photography, OCT, OCTA, visual fields, intraocular pressure data, and multimodal clinical datasets (Table 10).

- Applications include optic disc and cup segmentation, RNFL/GCL analysis, visual field defect classification, progression prediction, and risk stratification.

- Recent reviews highlight AI’s potential in early glaucoma detection using fundus photographs and OCT, as well as disease progression prediction using CNNs, recurrent neural networks, transformer models, autoencoders, and multimodal models.[14]

- Machine learning has been applied to data from the Sensimed Triggerfish (Sensimed AG, Lausanne, Switzerland), a contact lens-based device for continuous IOP-related monitoring that does not measure IOP directly but records corneal strain changes associated with IOP fluctuations.[15]

Table 10: AI in Glaucoma

| AI input | Use in glaucoma | Strength | Limitation |

| Fundus photo | Cup/disc, rim, RNFL defect detection | Scalable screening | Myopia/disc size confounding |

| OCT RNFL/GCL | Segmentation and classification | Objective structural data | Segmentation artifacts |

| OCTA | Vessel density/perfusion | Emerging biomarker | Artifacts, normative uncertainty |

| Visual field | Defect detection and progression | Functional relevance | Variability and learning effect |

| Multimodal data | Risk/progression prediction | Closer to clinical reasoning | Complex validation needed |
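Segmentation outputs become clinical measurements through simple post-processing. As a sketch, assuming a model has produced binary optic disc and optic cup masks for a fundus photograph (2D grids where 1 marks the structure), the vertical cup-to-disc ratio can be estimated as the vertical extent of the cup divided by that of the disc. The function names and tiny masks are hypothetical, and a real pipeline would also need to catch the segmentation failures that Table 10 lists as a limitation.

```python
def vertical_extent(mask):
    """Number of rows spanned by a binary mask (0 if the mask is empty)."""
    rows = [i for i, row in enumerate(mask) if any(row)]
    return rows[-1] - rows[0] + 1 if rows else 0


def vertical_cdr(disc_mask, cup_mask):
    disc_height = vertical_extent(disc_mask)
    if disc_height == 0:
        raise ValueError("no optic disc segmented; image needs human review")
    return vertical_extent(cup_mask) / disc_height


# Hypothetical 10 x 6 masks: the disc spans rows 0-9, the cup rows 3-6.
disc = [[1] * 6 for _ in range(10)]
cup = [[0] * 6 for _ in range(10)]
for r in range(3, 7):
    cup[r][2:4] = [1, 1]

print(vertical_cdr(disc, cup))  # 0.4
```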

Pediatric Ophthalmology and Strabismus

- AI can support pediatric screening for ROP, amblyopia risk factors, strabismus, pediatric cataract, refractive error, and myopia progression (Table 11).[16][17][18][19]

- Pediatric ophthalmology is especially suitable for AI-assisted screening because early detection can prevent amblyopia and irreversible visual impairment, while access to specialists may be limited in many settings.

Table 11. AI in Pediatric Ophthalmology

| Pediatric use | AI input | Clinical relevance |

| Strabismus | External eye photographs | Screening/referral |

| Amblyopia risk | Red reflex/photorefraction | Early detection |

| Pediatric cataract | Slit-lamp photographs | Severity assessment |

| Myopia | Refraction, axial length, age | Progression prediction |

| ROP | Widefield retinal imaging | Plus disease scoring |

Ocular Oncology

- AI has potential in ocular oncology for lesion detection, tumor classification, margin assessment, prognostication, and treatment-response prediction (Table 12).[20]

- Applications include ocular surface squamous neoplasia, choroidal melanoma, retinoblastoma, basal cell carcinoma, and eyelid tumours.

- These tools remain largely assistive and require careful validation because false-negative results in ocular oncology may delay diagnosis of vision- or life-threatening disease.

Table 12: AI in Ocular Oncology

| Tumor area | AI application |

| OSSN | Lesion detection and margin demarcation |

| Choroidal melanoma | Prognosis and metastasis risk prediction |

| Basal cell carcinoma | Reconstruction planning |

| Retinoblastoma | Imaging classification and treatment response |

| Eyelid tumors | Referral and malignancy risk stratification |

Oculoplastics

- In oculoplastics, AI can analyse facial photographs, external eye images, CT scans, and surgical photographs to support eyelid measurements, ptosis grading, thyroid eye disease assessment, facial asymmetry analysis, postoperative outcome tracking, and referral triage (Table 13).[21]

- These applications may improve objectivity and reproducibility in clinical documentation and surgical planning.

Table 13: AI in Oculoplastics

| AI task | Input | Use |

| Eyelid measurements | Facial photographs | MRD1, palpebral fissure, asymmetry |

| Blepharoptosis triage | External photographs | Referral decision support |

| Postoperative assessment | Serial photographs | Objective outcome tracking |

| Thyroid eye disease | Photographs/CT | Severity and activity support |

| Facial palsy | Video/photographs | Blink and symmetry assessment |
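Photograph-based eyelid measurements such as MRD1 (the distance from the corneal light reflex to the upper eyelid margin) reduce to converting a pixel distance into millimetres. A minimal sketch follows. It assumes that landmark detection has already located the reflex and the lid margin directly above it, and that a reference object of known size (for example, a calibration sticker) lies in the same image plane to set the scale. All function names, coordinates, and sizes below are hypothetical.

```python
def pixels_per_mm(reference_length_px: float, reference_length_mm: float) -> float:
    """Image scale derived from a reference object of known physical size."""
    return reference_length_px / reference_length_mm


def mrd1_mm(reflex_y_px: float, upper_lid_margin_y_px: float, scale_px_per_mm: float) -> float:
    """MRD1 in mm. Image y-coordinates increase downward, so the lid margin
    normally has a smaller y value than the corneal light reflex; a negative
    result means the margin lies below the reflex."""
    return (reflex_y_px - upper_lid_margin_y_px) / scale_px_per_mm


# Hypothetical image: a 10 mm calibration sticker spans 200 px.
scale = pixels_per_mm(200, 10)     # 20 px per mm
print(mrd1_mm(520, 440, scale))    # 4.0 (mm)
```

The accuracy of such measurements depends as much on consistent photography (head position, gaze, distance) as on the landmark model itself.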

Neuro-ophthalmology

- AI in neuro-ophthalmology has been studied for papilledema detection, optic disc edema classification, differentiation of glaucomatous from non-glaucomatous optic neuropathy, visual field pattern recognition, and research into spaceflight-associated neuro-ocular syndrome, or SANS (Table 14).[22][23][24][25]

- Because neuro-ophthalmic disorders can be sight- or life-threatening, these systems should be used for triage and decision support rather than as standalone diagnostic tools.

Table 14: AI in Neuro-ophthalmology

| Neuro-ophthalmic problem | AI input | Potential use |

| Papilledema | Fundus photographs | Urgent detection/triage |

| AION/NAION | Fundus/OCT/VF | Optic neuropathy classification |

| GON vs NGON | Disc photo/OCT/VF | Referral triage |

| Visual field defects | Automated perimetry | Pattern classification |

| SANS | OCT/fundus imaging | Research and monitoring |

Surgical AI and Robotic Ophthalmology

- AI is increasingly being explored for surgical video analysis, instrument tracking, phase recognition, complication prediction, surgical skill assessment, and robotic assistance (Table 15).[26][27]

- In cataract and vitreoretinal surgery, AI may help standardise surgical training, identify high-risk steps, and support postoperative quality improvement.

- These systems remain largely assistive and research-based, and their clinical value depends on prospective validation and integration into the surgical workflow.

Table 15: Surgical AI Tasks

| Surgical AI task | Example use |

| Phase recognition | Identify capsulorhexis, phacoemulsification, IOL insertion |

| Instrument tracking | Analyze instrument-tissue interaction |

| Complication prediction | Estimate posterior capsule rupture risk |

| Skill assessment | Objective surgical training feedback |

| Robotic assistance | Tremor reduction and precision maneuvers |
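Phase recognition is often framed as classifying each video frame, and frame-by-frame predictions tend to flicker between phases. One simple post-processing step is a sliding-window majority vote over the per-frame labels. It is shown here as an illustrative sketch, not the method of any specific system, and the window size and label sequence are hypothetical.

```python
from collections import Counter


def smooth_phases(frame_labels, window=5):
    """Replace each frame's label with the most common label in a centred window."""
    half = window // 2
    smoothed = []
    for i in range(len(frame_labels)):
        neighbourhood = frame_labels[max(0, i - half): i + half + 1]
        smoothed.append(Counter(neighbourhood).most_common(1)[0][0])
    return smoothed


# Hypothetical per-frame predictions with one spurious "phaco" frame at index 4.
raw = ["rhexis"] * 4 + ["phaco"] + ["rhexis"] * 3 + ["phaco"] * 6 + ["IOL"] * 4
print(smooth_phases(raw))
```

Larger windows suppress more noise but blur the true boundaries between phases, so the window would need tuning against annotated surgical videos.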

Public Health, Teleophthalmology, and Low-Resource Settings

- AI may expand access to eye care by enabling screening and triage outside specialist clinics.

- This is most advanced in diabetic retinopathy screening but may extend to ROP, glaucoma, cataract, and anterior segment disease.

- In low-resource settings, AI can support task-shifting, mobile screening units, teleophthalmology programs, and prioritization of patients requiring specialist review.[28]

- Safe deployment requires validation in the local population, attention to image quality, clear referral pathways, data privacy safeguards, and post-deployment performance monitoring.

Regulatory Approval and Clinical Deployment

- Regulatory approval is essential before an ophthalmic AI system can be used as a clinical device, especially when it influences diagnosis, referral, or treatment decisions (Table 16).

- AI tools may function as assistive systems, where the clinician interprets the output, or as autonomous systems, where the device generates a clinical decision without specialist interpretation.

- The most mature example in ophthalmology is autonomous diabetic retinopathy screening, with FDA-cleared systems such as LumineticsCore, EyeArt, and AEYE-DS used to detect referable diabetic retinopathy in selected care settings[5][29].

- The U.S. FDA also maintains a public list of AI-enabled medical devices to improve transparency regarding authorized AI technologies.[1]

Table 16: Regulatory concepts in Ophthalmic AI

| Regulatory concept | Meaning | Why it matters |

| Software as a Medical Device | Software used for a medical purpose | AI may require device-style regulation |

| Autonomous AI | Produces result without specialist interpretation | Higher validation burden |

| Assistive AI | Supports clinician decision-making | Clinician remains central |

| Locked algorithm | Fixed after approval | Predictable performance |

| Adaptive algorithm | May change with new data | Needs lifecycle monitoring |

| Post-market surveillance | Monitoring after deployment | Detects drift and safety issues |
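Post-market surveillance and drift detection can start with a periodic comparison of AI outputs against reference grading on an audited sample of cases. The sketch below assumes each audited case records the AI result and a reference-standard result. It tracks sensitivity over a rolling window and raises an alert when sensitivity drops below a target. The class name, window size, target, and minimum case count are hypothetical; in practice they would come from the device's validation data and local governance.

```python
from collections import deque


class SensitivityMonitor:
    """Rolling sensitivity of AI referral decisions against reference-standard grading."""

    def __init__(self, window=200, target=0.85, min_cases=30):
        self.recent = deque(maxlen=window)  # AI results for the most recent diseased cases
        self.target = target
        self.min_cases = min_cases

    def record(self, ai_positive: bool, reference_positive: bool) -> None:
        # Sensitivity depends only on cases the reference standard calls diseased.
        if reference_positive:
            self.recent.append(ai_positive)

    def sensitivity(self):
        if not self.recent:
            return None
        return sum(self.recent) / len(self.recent)

    def alert(self) -> bool:
        """True when enough cases are available and sensitivity is below target."""
        s = self.sensitivity()
        return s is not None and len(self.recent) >= self.min_cases and s < self.target


# Hypothetical audit stream: 40 reference-positive cases, 32 of them flagged by the AI.
monitor = SensitivityMonitor(window=50, target=0.85)
for i in range(40):
    monitor.record(ai_positive=(i % 5 != 0), reference_positive=True)
print(monitor.sensitivity(), monitor.alert())  # 0.8 True
```

A fuller audit would also track specificity and ungradable rates and break results down by subgroup, since an overall figure can hide falling performance in one population.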

Ethical, Legal and Social Considerations

- The use of AI in ophthalmology raises ethical and legal concerns related to privacy, bias, explainability, accountability, equity, and patient trust[30].

- Ophthalmic AI systems often require large datasets containing fundus images, OCT scans, visual fields, and clinical information; therefore, secure data storage, de-identification, informed consent, and governance are important.

- Bias may occur when training datasets underrepresent certain age groups, ethnicities, ocular phenotypes, image devices, or disease severities. Such bias can reduce accuracy in real-world populations and worsen healthcare disparities if not addressed through diverse datasets and external validation[31][32].

- Another major issue is explainability. Many deep learning systems provide accurate outputs but limited insight into how the decision was made. This can make it difficult for clinicians to know when to trust or override an AI result.

- Generative AI and large language models introduce additional risks, including hallucinated information, fabricated references, overconfident responses, and automation bias.[33]

- Therefore, ophthalmic AI should be deployed as a human-centred tool, with clear responsibility for final clinical decisions, clinician verification, and transparent communication with patients. Ethical risks and mitigation strategies are listed in Table 17.

Table 17: Ethical risks and mitigation strategies

| Risk | Example | Mitigation |

| Privacy breach | Fundus/OCT/EHR data leakage | Encryption, de-identification, access control |

| Bias | Poor performance in underrepresented groups | Diverse training and external validation |

| Lack of explainability | Black-box referral output | Heatmaps, interpretable models, clinician review |

| Hallucination | LLM invents advice or citation | Retrieval, source checking, human verification |

| Automation bias | Clinician over-trusts AI result | Training and override pathways |

| Equity gap | AI available only in affluent centers | Public health integration and low-cost deployment |

| Data drift | Falling performance over time | Continuous audit and recalibration |

| Liability uncertainty | Unclear responsibility for the missed diagnosis | Defined workflow and documentation |
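The "retrieval, source checking" mitigation for hallucination in Table 17 can be partly automated. A minimal sketch follows, assuming the language model is instructed to cite retrieved documents with bracketed numbers such as [2]. Any cited number that does not match a retrieved source, and any answer with no citations at all, is flagged for human verification. The function name and example text are hypothetical. This check catches invented citation numbers only. It cannot confirm that a real source supports the statement, which still requires clinician review.

```python
import re

CITATION = re.compile(r"\[(\d+)\]")


def check_citations(answer: str, retrieved_ids: set) -> dict:
    """Flag citations in an LLM answer that do not match any retrieved source."""
    cited = {int(n) for n in CITATION.findall(answer)}
    unknown = sorted(cited - retrieved_ids)
    return {
        "cited": sorted(cited),
        "unknown": unknown,
        "needs_human_review": bool(unknown) or not cited,
    }


answer = "Drusen size is used in AMD staging [1]; see also [4]."
print(check_citations(answer, retrieved_ids={1, 2, 3}))
```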

Future Directions

- The next phase of ophthalmic AI is moving beyond single-task image classifiers toward foundation models, multimodal AI, large language models, and AI agents.

- Foundation models such as RETFound are trained on large-scale ophthalmic datasets and can be adapted to multiple downstream tasks with less labelled data than conventional models.

- Vision and vision-language foundation models may enable the combined interpretation of fundus photographs, OCT, OCTA, visual fields, clinical notes, and structured patient data, bringing AI closer to real clinical reasoning[12].

- Large language models may support patient education, documentation, referral letters, summarisation, triage, research assistance, and clinical decision support, but they require safeguards against unsafe recommendations.

- Future systems may combine imaging AI, language models, electronic health records, calculators, and guidelines into AI agents that support workflow from screening to referral and follow-up[12].

Clinical Takeaways

- Most ophthalmic AI tools remain assistive rather than fully autonomous and should be interpreted in the context of image quality, patient characteristics, clinical examination, and the population in which the model was validated.

- Foundation models, multimodal AI, large language models, and AI agents represent the newest phase of ophthalmic AI. However, widespread use requires prospective validation, bias assessment, privacy safeguards, clinician oversight, and post-deployment monitoring.

- In practice, AI should augment and not replace the ophthalmologist.

References

- [1] Kapoor R, Walters SP, Al-Aswad LA. The current state of artificial intelligence in ophthalmology. Surv Ophthalmol. 2019;64(2):233-240. doi:10.1016/j.survophthal.2018.09.002

- [2] Akkara JD, Kuriakose A. Role of artificial intelligence and machine learning in ophthalmology. Kerala J Ophthalmol 2019;31:150-60. doi: 10.4103/kjo.kjo_54_19

- [3] Ting DSW, Pasquale LR, Peng L, Campbell JP, Lee AY, Raman R, Tan GSW, Schmetterer L, Keane PA, Wong TY. Artificial intelligence and deep learning in ophthalmology. Br J Ophthalmol. 2019 Feb;103(2):167-175. doi: 10.1136/bjophthalmol-2018-313173. Epub 2018 Oct 25. PMID: 30361278; PMCID: PMC6362807.

- [4] U.S. Food and Drug Administration. De Novo classification request for IDx-DR (DEN180001). https://www.accessdata.fda.gov/cdrh_docs/reviews/DEN180001.pdf

- [5] Teng CW, Patel SD, Barkmeier AJ, Liu TYA, Myung D, Henderer J, Liu J, Hansen E, Al-Aswad LA. Autonomous Artificial Intelligence in Diabetic Retinopathy Testing-Lessons Learned on Successful Health System Adoption. Ophthalmol Sci. 2025 Sep 3;6(1):100935. doi: 10.1016/j.xops.2025.100935. PMID: 41140908; PMCID: PMC12553049.

- [6] Zhou Y, Chia MA, Wagner SK, et al. A foundation model for generalizable disease detection from retinal images. Nature. 2023;622:156-163. doi: 10.1038/s41586-023-06555-x.

- [7] Most JA, Folk GA, Walker EH, et al. Evaluating the clinical utility of multimodal large language models for detecting age-related macular degeneration from retinal imaging. Sci Rep. 2025;15:33214. doi: 10.1038/s41598-025-18306-1.

- [8] Rizwan Khan AY, Malik MB. Artificial Intelligence in Ophthalmology: Practical Applications, Subspecialty Evidence and Real-World Deployment. Cureus. 2025 Nov 5;17(11):e96121. doi: 10.7759/cureus.96121. PMID: 41200258; PMCID: PMC12587209.

- [9] Kapoor R, Whigham BT, Al-Aswad LA. Artificial Intelligence and Optical Coherence Tomography Imaging. Asia Pac J Ophthalmol (Phila). 2019 Mar-Apr;8(2):187-194. doi: 10.22608/APO.201904. Epub 2019 Apr 18. PMID: 30997756.

- [10] Lindegger DJ, Wawrzynski J, Saleh GM. Evolution and Applications of Artificial Intelligence to Cataract Surgery. Ophthalmol Sci. 2022 Apr 25;2(3):100164. doi: 10.1016/j.xops.2022.100164. PMID: 36245750; PMCID: PMC9559105.

- [11] Jukić A, Pavan J, Kalauz M, Kopić A, Markušić V, Jukić T. Artificial Intelligence in Diabetic Retinopathy and Diabetic Macular Edema: A Narrative Review. Bioengineering (Basel). 2025 Dec 9;12(12):1342. doi: 10.3390/bioengineering12121342. PMID: 41463639; PMCID: PMC12729270.

- [12] Jin K, Yu T, Ying GS, Ge Z, Li KZ, Zhou Y, Shi D, Wang M, Goktas P, Grzybowski A. A systematic review of vision and vision-language foundation models in ophthalmology. Adv Ophthalmol Pract Res. 2025 Oct 24;6(1):8-19. doi: 10.1016/j.aopr.2025.10.004. PMID: 41532028; PMCID: PMC12794246.

- [13] Eleiwa T, Elsawy A, Özcan E, Abou Shousha M. Automated diagnosis and staging of Fuchs' endothelial cell corneal dystrophy using deep learning. Eye Vis (Lond). 2020 Sep 1;7:44. doi: 10.1186/s40662-020-00209-z. PMID: 32884962; PMCID: PMC7460770.

- [14] Lan CH, Chiu TH, Yen WT, Lu DW. Artificial Intelligence in Glaucoma: Advances in Diagnosis, Progression Forecasting, and Surgical Outcome Prediction. Int J Mol Sci. 2025 May 8;26(10):4473. doi: 10.3390/ijms26104473. PMID: 40429619; PMCID: PMC12111320.

- [15] Martin KR, Mansouri K, Weinreb RN, et al. Use of Machine Learning on Contact Lens Sensor-Derived Parameters for the Diagnosis of Primary Open-angle Glaucoma. Am J Ophthalmol. 2018;194:46-53. doi:10.1016/j.ajo.2018.07.005

- [16] Janardhanan A, Ravindran M, Fathima A, Pawar N. Artificial intelligence applications in pediatric ophthalmology: A comprehensive review. Journal of Clinical Ophthalmology and Research. 2025 Jul-Sep;13(3):378-383. doi: 10.4103/jcor.jcor_11_25.

- [17] Yang Z, Wu D, Li J, Chen X, Liu L. Systematic review and meta-analysis of artificial intelligence models for strabismus screening: methodological insights and future directions. Int J Surg. 2025 Nov 1;111(11):8447-8461. doi: 10.1097/JS9.0000000000002916. Epub 2025 Jul 15. PMID: 40696939; PMCID: PMC12626631.

- [18] Gao J, Fang N, Xu Y. Application of Artificial Intelligence in Retinopathy of Prematurity From 2010 to 2023: A Bibliometric Analysis. Health Sci Rep. 2025 Apr 18;8(4):e70718. doi: 10.1002/hsr2.70718. PMID: 40256143; PMCID: PMC12007426.

- [19] Morse J, Oatts JT. Amblyopia screening: the current state and opportunities for optimization. Expert Rev Med Devices. 2025 Jan;22(1):1-4. doi: 10.1080/17434440.2024.2449490. Epub 2025 Jan 6. PMID: 39760640; PMCID: PMC11750595.

- [20] Sachdeva K, Butt FR, Mihalache A, Nassrallah G, Muni RH, Popovic MM. A systematic review of artificial intelligence models in ocular tumour diagnosis. Can J Ophthalmol. 2026 Jun;61(3):518-527. doi: 10.1016/j.jcjo.2025.12.020. Epub 2026 Jan 31. PMID: 41548879.

- [21] Cai Y, Zhang X, Cao J, Grzybowski A, Ye J, Lou L. Application of artificial intelligence in oculoplastics. Clin Dermatol. 2024 May-Jun;42(3):259-267. doi: 10.1016/j.clindermatol.2023.12.019. Epub 2024 Jan 4. PMID: 38184122.

- ↑ Biousse V, Newman NJ, Najjar RP, Vasseneix C, Xu X, Ting DS, Milea LB, Hwang JM, Kim DH, Yang HK, Hamann S, Chen JJ, Liu Y, Wong TY, Milea D; BONSAI (Brain and Optic Nerve Study with Artificial Intelligence) Study Group. Optic Disc Classification by Deep Learning versus Expert Neuro-Ophthalmologists. Ann Neurol. 2020 Oct;88(4):785-795. doi: 10.1002/ana.25839. Epub 2020 Aug 7. PMID: 32621348.

- ↑ Biousse V, Najjar RP, Tang Z, Lin MY, Wright DW, Keadey MT, Wong TY, Bruce BB, Milea D, Newman NJ; BONSAI Study Group. Application of a Deep Learning System to Detect Papilledema on Nonmydriatic Ocular Fundus Photographs in an Emergency Department. Am J Ophthalmol. 2024 May;261:199-207. doi: 10.1016/j.ajo.2023.10.025. Epub 2023 Nov 4. PMID: 37926337.

- ↑ Lin MY, Najjar RP, Tang Z, Cioplean D, Dragomir M, Chia A, Patil A, Vasseneix C, Peragallo JH, Newman NJ, Biousse V, Milea D; BONSAI (Brain and Optic Nerve Study with Artificial Intelligence) group. The BONSAI (Brain and Optic Nerve Study with Artificial Intelligence) deep learning system can accurately identify pediatric papilledema on standard ocular fundus photographs. J AAPOS. 2024 Feb;28(1):103803. doi: 10.1016/j.jaapos.2023.10.005. Epub 2024 Jan 10. PMID: 38216117.

- ↑ Khullar S, Morya AK, Aggarwal S, Gupta T, Priyanka P, Morya R. Ocular health in outer space and beyond gravity: A minireview. World J Clin Cases. 2026 Jan 26;14(3):117257. doi: 10.12998/wjcc.v14.i3.117257. PMID: 41608604; PMCID: PMC12835991.

- ↑ Ghamsarian N, El-Shabrawi Y, Nasirihaghighi S, Putzgruber-Adamitsch D, Zinkernagel M, Wolf S, Schoeffmann K, Sznitman R. Cataract-1K Dataset for Deep-Learning-Assisted Analysis of Cataract Surgery Videos. Sci Data. 2024 Apr 12;11(1):373. doi: 10.1038/s41597-024-03193-4. PMID: 38609405; PMCID: PMC11014927.

- ↑ Mueller S, Sachdeva B, Prasad SN, Lechtenboehmer R, Holz FG, Finger RP, Murali K, Jain M, Wintergerst MWM, Schultz T. Phase recognition in manual Small-Incision cataract surgery with MS-TCN + + on the novel SICS-105 dataset. Sci Rep. 2025 May 21;15(1):16886. doi: 10.1038/s41598-025-00303-z. PMID: 40399321; PMCID: PMC12095642.

- ↑ Chawla R, Karkhanis P, Shah M, Das A, Sharma R, Almaula D, Venkatesh P, Singh HV, Kumar M, Samanta R, Kumarl V, Shah A, Vadera B, Jain N, Sen A, Shreedhar S, Garg V, Dhaval S, Ganesh K, Rana S, Tandon R. Artificial intelligence for advancing eye care in resource-poor settings: Assessing the predictive accuracy of an AI-model for diabetic retinopathy screening in India. Glob Epidemiol. 2025 Jun 4;9:100209. doi: 10.1016/j.gloepi.2025.100209. PMID: 40538414; PMCID: PMC12177155.

- ↑ Reuters. Optomed Oyj, AEYE Health say portable device to detect eye issues gets FDA nod. 2024.

- ↑ Savastano MC, Rizzo C, Fossataro C, Bacherini D, Giansanti F, Savastano A, Arcuri G, Rizzo S, Faraldi F. Artificial intelligence in ophthalmology: Progress, challenges, and ethical implications. Prog Retin Eye Res. 2025 Jul;107:101374. doi: 10.1016/j.preteyeres.2025.101374. Epub 2025 Jun 3. PMID: 40473198.

- ↑ Yousef YA, Shdeifat A, Yousef L, Mohammad M, AlNawaiseh T, Abdullah L, Elfalah M, Sultan I, AlNawaiseh I. Artificial intelligence in ophthalmology: trust, bias, and responsibility from the perspective of medical students and ophthalmologists. Front Ophthalmol (Lausanne). 2026 Mar 3;6:1766974. doi: 10.3389/fopht.2026.1766974. PMID: 41852891; PMCID: PMC12991976.

- ↑ Tan, W., Wei, Q., Xing, Z. et al. Fairer AI in ophthalmology via implicit fairness learning for mitigating sexism and ageism. Nat Commun 15, 4750 (2024). https://doi.org/10.1038/s41467-024-48972-0

- ↑ Zhang Z, et al. Evaluating Large Language Models in Ophthalmology.J Med Internet Res 2025;27:e76947doi:10.2196/76947